The current state of moderation across various online communities, especially on platforms like Reddit, has been a topic of much debate and dissatisfaction. Users have voiced concerns over issues such as moderator rudeness, abuse, bias, and a failure to adhere to their own guidelines. Moreover, many communities suffer from a lack of active moderation, as moderators often disengage due to the overwhelming demands of what essentially amounts to an unpaid, full-time job. This has led to a reliance on automated moderation tools and restrictions on user actions, which can stifle community engagement and growth.

In light of these challenges, it’s time to explore alternative models of community moderation that can distribute responsibilities more equitably among users, reduce moderator burnout, and improve overall community health. One promising approach is the implementation of a trust level system, similar to that used by Discourse. Such a system rewards users for positive contributions and active participation by gradually increasing their privileges and responsibilities within the community. This not only incentivizes constructive behavior but also allows for a more organic and scalable form of moderation.

Key features of a trust level system include:

- Sandboxing New Users: Initially limiting the actions new users can take to prevent accidental harm to themselves or the community.

- Gradual Privilege Escalation: Allowing users to earn more rights over time, such as the ability to post pictures, edit wikis, or moderate discussions, based on their contributions and behavior.

- Federated Reputation: Considering the integration of federated reputation systems, where users can carry over their trust levels from one community to another, encouraging cross-community engagement and trust.
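As a rough illustration of the first two features (sandboxing and gradual privilege escalation), here is a minimal Python sketch. The level names, thresholds, and privilege names are all hypothetical; they are not taken from Discourse's actual configuration, and a real system would likely track more signals.

```python
from dataclasses import dataclass
from enum import IntEnum


class TrustLevel(IntEnum):
    NEW = 0
    BASIC = 1
    MEMBER = 2
    REGULAR = 3


# Hypothetical requirements per level:
# (minimum account age in days, minimum posts read, maximum upheld reports).
REQUIREMENTS = {
    TrustLevel.BASIC: (1, 30, 2),
    TrustLevel.MEMBER: (15, 100, 1),
    TrustLevel.REGULAR: (50, 500, 0),
}

# Hypothetical privileges unlocked at each level (cumulative).
PRIVILEGES = {
    TrustLevel.NEW: {"comment"},
    TrustLevel.BASIC: {"post_images", "post_links"},
    TrustLevel.MEMBER: {"edit_wiki"},
    TrustLevel.REGULAR: {"hide_spam"},
}


@dataclass
class UserStats:
    account_age_days: int
    posts_read: int
    upheld_reports: int  # reports against this user that moderators upheld


def trust_level(stats: UserStats) -> TrustLevel:
    """Return the highest level whose requirements (and all lower ones) are met."""
    level = TrustLevel.NEW
    for candidate in sorted(REQUIREMENTS):
        min_age, min_read, max_reports = REQUIREMENTS[candidate]
        meets = (
            stats.account_age_days >= min_age
            and stats.posts_read >= min_read
            and stats.upheld_reports <= max_reports
        )
        if not meets:
            break  # levels must be earned in order
        level = candidate
    return level


def privileges(level: TrustLevel) -> set:
    """Collect every privilege granted at or below the given level."""
    granted = set()
    for lvl, privs in PRIVILEGES.items():
        if lvl <= level:
            granted |= privs
    return granted
```

A brand-new account would land in `TrustLevel.NEW` and could only comment, while the first level is deliberately cheap to reach so that the sandbox does not turn into karma farming.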

Implementing a trust level system could significantly alleviate the current strains on moderators and create a more welcoming and self-sustaining community environment. It encourages users to be more active and responsible members of their communities, knowing that their efforts will be recognized and rewarded. Moreover, it reduces the reliance on a small group of moderators, distributing moderation tasks across a wider base of engaged and trusted users.

For communities within the Fediverse, adopting a trust level system could mark a significant step forward in how we think about and manage online interactions. It offers a path toward more democratic and self-regulating communities, where moderation is not a burden shouldered by the few but a shared responsibility of the many.

As we continue to navigate the complexities of online community management, it’s clear that innovative approaches like trust level systems could hold the key to creating more inclusive, respectful, and engaging spaces for everyone.

Interesting idea. But after thinking about it for a few minutes, i don’t think federated reputation would work for moderation privileges. Instances have their own rules, and i would not trust a hexbear mod to behave in line with lemmy.world rules and values. The same is true for communities really.

Would it still work for other privileges though?

Probably. On Reddit, some of it can be managed at the community (subreddit) level by bots that automatically delete posts or comments from recently joined people. Maybe a tiered system of mod privileges could work, where a junior mod can delete spam/offensive posts but not ban people. Mind you, banning people is not really effective in a fediverse where you can easily create a new user account on another instance.

Very interesting idea.

My immediate reaction is that the value of accounts listed for sale increases as their privileges increase. That’s not much different from the situation today, where accounts are more valuable the older they are and the longer their post history. I would, however, consider the potential impact of a (highly) privileged account being turned over to a bad actor.

Great thoughts on a problem that deserves a solution!

PS: good news so far:

alt-text: Google search result showing “No results found for “buy lemmy account””

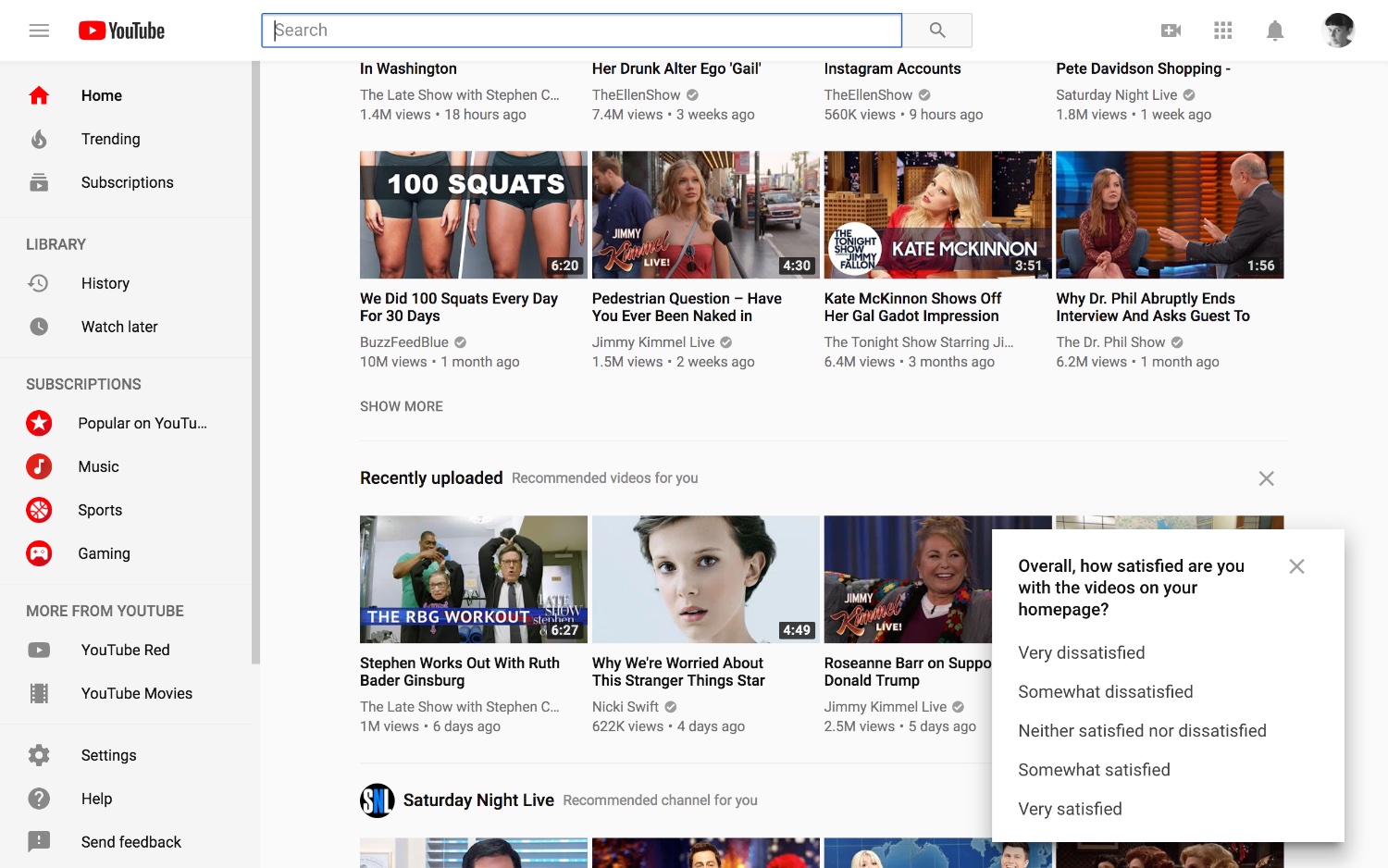

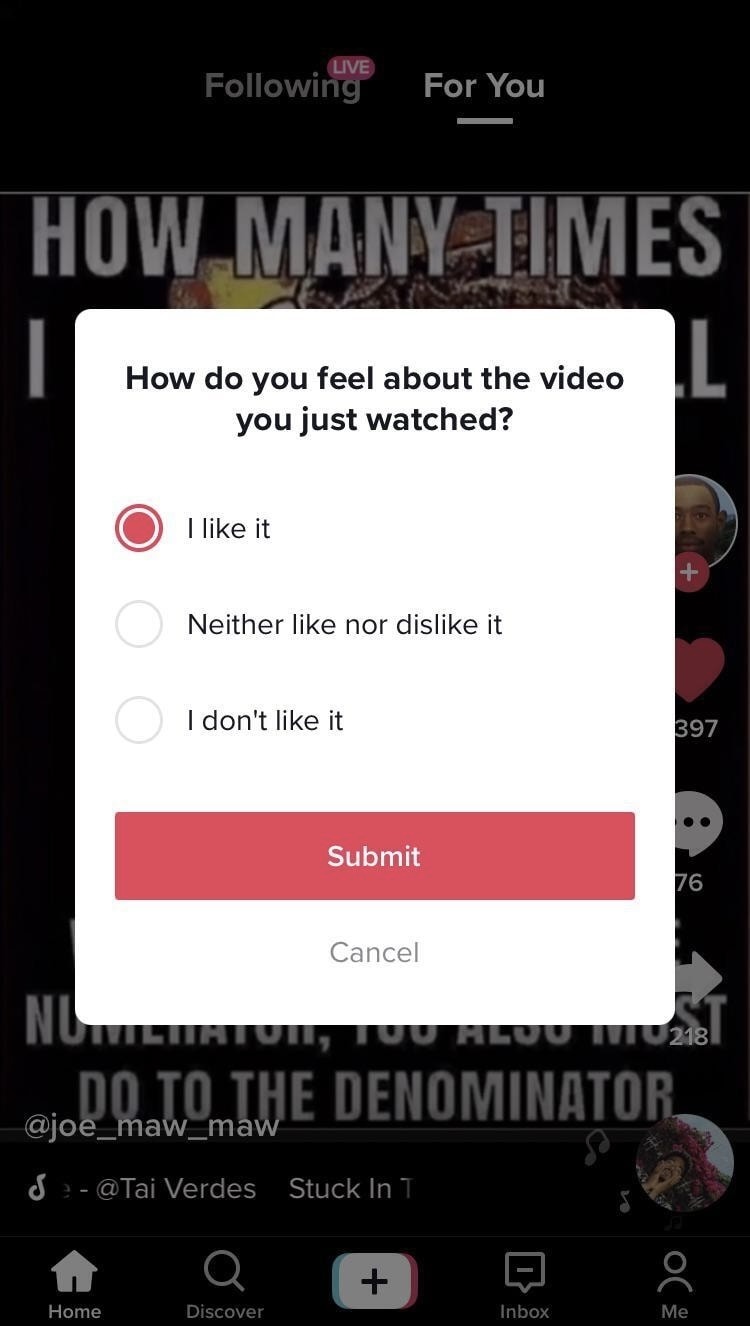

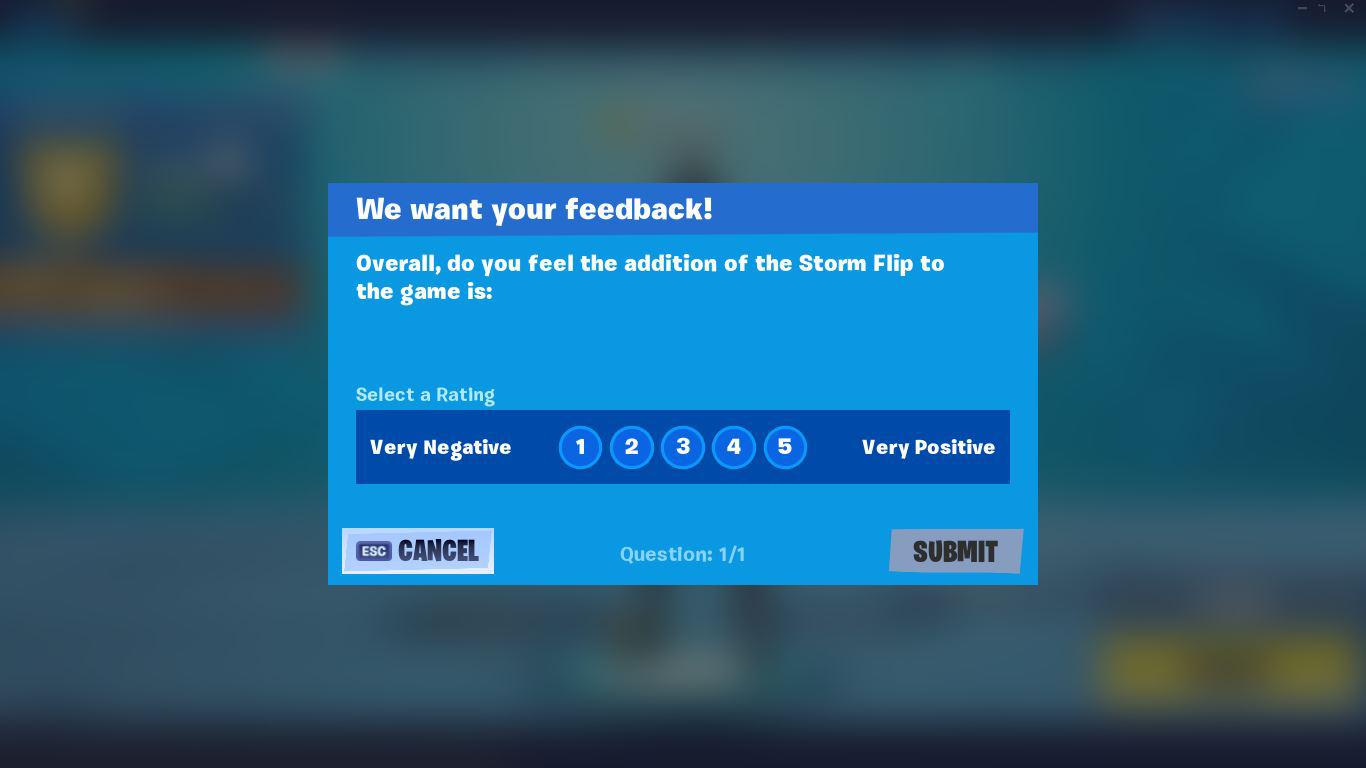

Got me thinking about the prompts that YouTube, TikTok, & Fortnite randomly give users.

Could help us understand how users feel about accounts overall or about reported comments/posts. Obviously can’t just do surveys for every comment though, they have to be somewhat rare.

alt-text: all 3 screenshots are similar; final is a prompt showing:

We want your feedback!

Overall, do you feel the addition of the Storm Flip to the game is:

Select a Rating

Very Negative 1 through 5 Very Positive

Getting quizzed about unimportant stuff is the most annoying trend. Anything like that needs an “I don’t want to give feedback” option at a minimum and an easy way to opt out of future feedback requests.

Yes I see the cancel button, but it isn’t the same as “I don’t want to give feedback” or “This is not important enough for me to care”.

Good point.

Can imagine the surveys being opt-in. Folks who do opt in will know they’re doing a service to the community.

BTW: when you see a survey from a big tech company, you can expect it’s used to influence your algorithm - I wonder if it has more impact on your experience than others’

The constant nagging about donations is another. My doctor’s office is in a health care organization that wants a survey after every single visit, which is another. It’s just constant nagging every time I spend money, and I know they don’t actually use the feedback for anything except punishing employees. There is no point in giving feedback when it won’t be used to actually improve anything.

Now there is one organization I work with that does have 3rd party surveys that I know for a fact actually uses the feedback correctly. I jump at the chance to do those, and give it honestly and with comments!

I already give feedback here through upvotes and downvotes and user comments. Something about the UI might be something I would give feedback on, but things like moderation I would assume will either be ignored if it doesn’t reinforce existing practices or used negatively based on past experience.

That’s basically the upvote/downvote button.

There would definitely need to be a way to handle people switching instances, so that it doesn’t encourage sticking to an instance that goes downhill or if an instance goes belly up. The former has a chance to transfer their identity, the latter wouldn’t.

You will then have instances that abuse the system by allowing their users to quickly rank high, just so they can infiltrate other instances with high-ranking accounts.

Doesn’t 0.19 already allow one click migration?

How would someone migrate from a dead instance?

Good point. Reminds me to keep a backup of my settings somewhere

This sounds a lot like what Stack Overflow does. And you know what people think about that community. It’s elitist and hostile towards newcomers and anyone who doesn’t know the convoluted rules for how to build their reputation.

If I want to interact with a community to ask a question or comment on something I found interesting but can’t because of rules that were made explicitly to keep newcomers from posting, I won’t stay and gain reputation. I leave.

Nobody says that about Discourse, perhaps they have implemented it better, and Discourse is the one I based the idea on.

Good luck. The Lemmy devs took out total votes on profiles so people cannot see in aggregate how communities have viewed individual users in some lofty goal to be neutral. You’ll have to bend their arms backward for them to reintroduce some sort of aggregate score/trust.

I don’t have any hope left for Lemmy in this regard, but hopefully, some other Fediverse projects, other than Misskey, will improve the moderation system. Reddit-style moderation is one of the biggest jokes on the Internet.

From what I’ve heard, lemmy.world might migrate from Lemmy to Sublinks once it’s ready. There are alternatives to Lemmy.

I’m aware, and so will I (I’m self-hosting my own instance).

On a basic level, the idea of certain sandboxing, i.e. image and link posting restrictions along with rate limits for new accounts and new instances, is probably a good idea.

However, I do not think “super users” are a particularly good idea. I see it as preferable that instances and communities handle their own moderation with the help of user reports and some simple degree of automation.

An engaged user can already contribute to their community by joining the moderation team, and the mod view has made it significantly easier to have an overview of many smaller communities.

> On a basic level, the idea of certain sandboxing, i.e. image and link posting restrictions along with rate limits for new accounts and new instances, is probably a good idea.

If there were any limits for new accounts, I’d prefer if the first level was pretty easy to achieve; otherwise, this is pretty much the same as Reddit, where you need to farm karma in order to participate in the subreddits you like.

> However, I do not think “super users” are a particularly good idea. I see it as preferable that instances and communities handle their own moderation with the help of user reports and some simple degree of automation.

I don’t see anything wrong with users having privileges; what I find concerning is moderators who abuse their power. There should be an appeal process in place to address human bias and penalize moderators who misuse their authority. Removing their privileges could help mitigate issues related to potential troll moderators. Having trust levels can facilitate this process; otherwise, the burden of appeals would always fall on the admin. In my opinion, the admin should not have to moderate if they are unwilling; their role should primarily involve adjusting user trust levels to shape the platform according to their vision.

> An engaged user can already contribute to their community by joining the moderation team, and the mod view has made it significantly easier to have an overview of many smaller communities.

Even with the ability to enlarge moderation teams, Reddit relies on automod bots too frequently and we are beginning to see that on Lemmy too. I never see that on Discourse.

Jimmy Wales (of Wikipedia fame) has been working on something like this for several years. Trust Cafe is supposed to gauge your trustworthiness based on other people who trust you, with a hand-picked team of top users monitoring the whole thing — sort of an enlightened dictatorship model. It’s still a tiny community and much of the tech has to be fleshed out more, but there are definitely people looking into this approach.

I think your idea is not necessarily wrong, but it would be hard to get right, especially without making the entry into the fediverse too painful for new (non-tech) people. I think that is still the number one pain point.

I have been thinking about moderation and spammers on fediverse lately too, these are some rough ideas I had:

- Ability to set stricter/different rate limits for new accounts: accounts less than X old can do only A actions per N seconds [1] (with a better-explained rate-limit message on the frontend side)

- Some ability to not “fully” federate with too fresh instances (as a solution to note [1])

- Abuse reputation from modlog/modlog sharing/modlog distribution (not really federation) - this one is tricky, the theory is that if you get many moderation actions taken against you your “goodwill reputation” lowers (nothing to do with upvotes) and some instances could preemptively ban you/take mod action, either through automated means or (better) the mods of other instances would have some kind of (easy) access to this information so that they can employ it in their decision.

This has mostly nothing to do with bot spammers but instead with recurring problem makers/bad faith users etc.

Though this whole thing would require some kinds of trust chains between instances, not easy development-wise (this whole idea could range from built-in algorithms taking in information like instance age, user count, user age and so on, to some kind of manual instance trust grading by admins).
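The first idea in the list above (stricter rate limits for new accounts) could be sketched roughly like this in Python. This is only an illustration under assumed numbers: the limits, the "new account" cutoff, and the class name are hypothetical and are not part of Lemmy's actual rate limiting.

```python
from collections import defaultdict, deque

# Hypothetical limits as (max actions, window in seconds).
NEW_ACCOUNT_LIMIT = (3, 60)
ESTABLISHED_LIMIT = (20, 60)
# Hypothetical cutoff: accounts younger than 7 days count as "new".
NEW_ACCOUNT_AGE_SECONDS = 7 * 24 * 3600


class AgeAwareRateLimiter:
    """Sliding-window rate limiter whose limit depends on account age."""

    def __init__(self):
        # user id -> timestamps of recently allowed actions
        self._recent = defaultdict(deque)

    def allow(self, user_id, account_created_at, now):
        """Return True and record the action if the user is under their limit."""
        age = now - account_created_at
        if age < NEW_ACCOUNT_AGE_SECONDS:
            max_actions, window = NEW_ACCOUNT_LIMIT
        else:
            max_actions, window = ESTABLISHED_LIMIT

        recent = self._recent[user_id]
        # Drop timestamps that have fallen out of the window.
        while recent and now - recent[0] >= window:
            recent.popleft()

        if len(recent) >= max_actions:
            return False
        recent.append(now)
        return True
```

Timestamps are passed in explicitly (as seconds) so the behaviour is easy to test; a real server would use its own clock and would probably want the same kind of limit per federating instance as well.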

~

All this together, I wouldn’t be surprised if, in the future, there will eventually be some kinds of strata of instances, the free wild west with federate-to-any and the more closed in bubbles of instances (requiring some kind of entry process for other new instances).

[1] This does not solve the other problem with federation currently being block-list based instead of allow-list based (for good reasons).

One could write a few scripts/programs to simulate a federating instance and have tons of bots ready to go. This exact scenario is probably not common, because most instances will defederate the domain the moment they detect a larger amount of spam, but it could still be dangerous for the stability of servers. I couldn’t confirm whether the Lemmy federation API has any kind of limits, and I can’t really imagine how those would be implemented if federation traffic spikes a lot. (Also, in theory, one could have a shit-ton of domains and subdomains prepared and just send tons of spam from these? Unless there are some limits already, afaik the only way to protect against this would be to switch to allow-list based federation.)

Lot of assumptions here so tell me if I am wrong!

Edit: Also sorry for kind of piggy-backing on your post OP, wanted to get these ideas out here finally

I’ve wondered about instance bombing before, it seems like a low success but high impact vector

I had an idea for a system sort of like this to reduce moderator burden. The idea would be for each user to have a score based on their volume and ratio of correct reports to incorrect reports (determined by whether it ultimately resulted in a moderator action) of rule breaking comments/posts. Content is automatically removed if the cumulative scores of people who have reported it is high enough. Moderators can manually adjust the scores of users if needed, and undo community mod actions. More complex rules could be applied as needed for how scores are determined.
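A minimal Python sketch of how such a reporter score might work is below. The formula (accuracy multiplied by a volume factor that saturates), the threshold, and all names are assumptions made for illustration; the comment above does not specify them.

```python
from dataclasses import dataclass

# Hypothetical tuning values.
REMOVAL_THRESHOLD = 2.0   # cumulative reporter score needed for auto-removal
FULL_WEIGHT_REPORTS = 20  # reports needed before a reporter gets full weight


@dataclass
class Reporter:
    correct: int = 0    # reports that ended in a moderator action
    incorrect: int = 0  # reports that moderators dismissed

    def record(self, upheld: bool) -> None:
        """Update the tallies once a moderator has resolved a report."""
        if upheld:
            self.correct += 1
        else:
            self.incorrect += 1

    @property
    def score(self) -> float:
        """Accuracy scaled by volume, so the result is between 0 and 1."""
        total = self.correct + self.incorrect
        if total == 0:
            return 0.0
        accuracy = self.correct / total
        volume = min(total, FULL_WEIGHT_REPORTS) / FULL_WEIGHT_REPORTS
        return accuracy * volume


def should_auto_remove(reporters) -> bool:
    """Remove content once the reporters' combined score reaches the threshold."""
    return sum(r.score for r in reporters) >= REMOVAL_THRESHOLD
```

With these numbers, two long-standing accurate reporters are enough to trigger a removal, while any number of brand-new accounts (score 0) are not. Moderators adjusting scores by hand would simply edit the tallies.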

To address the possibility that such a system would be abused, I think the best solution would be secrecy. Just don’t let anyone know that this is how it works, or that there is a score attached to their account that could be gamed. Pretend it’s a new kind of automod or AI bot or something, and have a short time delay between the report that pushes it over the edge and the actual removal.

Functionality by obscurity does not work for a platform as open source and federated as lemmy

> Just don’t let anyone know that this is how it works, or that there is a score attached to their account that could be gamed.

> does not work for a platform as open source and federated as lemmy

Even if the system and scores were fully open and public, would there even be a way to game such a system? How would that be done?

Make a minimum viable instance to get federated.

Be normal for a while.

Boost bot users such that their scores are positive.

Use them for whatever mayhem you like

Could there be a way to protect against this? What if the scores were instance specific? If a user’s score is super high on one (or a few) instances and super low on the rest, that could suggest malicious activity.
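One way to picture that check is the Python sketch below. It assumes each instance exposes a score between 0 and 1 for the user, which is purely hypothetical, and the cutoffs are arbitrary placeholders.

```python
from statistics import median


def looks_boosted(scores_by_instance, high=0.8, low=0.2, max_high_share=0.25):
    """Flag a user rated very high by a few instances and low by the rest.

    scores_by_instance maps an instance name to that instance's score
    (assumed to be in the range 0..1) for one user.
    """
    values = list(scores_by_instance.values())
    if len(values) < 4:
        return False  # too little data to say anything

    high_count = sum(1 for v in values if v >= high)
    if high_count == 0:
        return False  # nobody rates this user highly, nothing to exploit

    few_boosters = high_count / len(values) <= max_high_share
    low_elsewhere = median(values) <= low
    return few_boosters and low_elsewhere
```

A flag like this would be a hint for a human moderator rather than proof: a user who is simply most active on their home instance could show a similar pattern.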

I guess that’s somewhat true if you are sharing an implementation around, but even avoiding the feature being widely known could make a difference. Even if it was known, I think the scoring could work alright on its own. A malicious removal could be quickly reversed manually and all reporters scores zeroed.

Oh I’m not saying the feature couldn’t work, and I like the idea. I’m just saying it wouldn’t be possible to keep it a secret, for obvious reasons.

You could implement it and just tell client makers it’s not an intended data point to display, or intentionally keep it less human-readable (count in hex).

I don’t get it. It’s open source. Anybody can just look at the code. Unless you’re talking about a closed-source binary blob, in which case chances are nobody will ever adopt it.

It’s just to stop honest people. Not everything has to be illegal to be limited.

I have zero trust in moderators here. They tone police when it suits their agendas and leave actual death threats up when it suits their agendas. I’ve done enough probing to realize I’m going to leave the fediverse when I find something reasonable. I need sanity in my mental spaces.

I’d advocate training an AI on removed posts and using that as a moderator tool. The moderator approves false positives until the AI gets more and more precise. You could even first gray out comments the AI sees as potentially harmful and have users vote on whether they should be viewable, while almost certainly harmful ones are collapsed and definitely harmful ones are blocked until approved. That way it’s moderated more gradually and gives some power back to the users. Not sure how power- and CPU-intensive this would be; maybe it could be shared between instances to balance the load.
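The graduated part of that idea can be sketched without any actual model. The Python snippet below assumes some classifier already produces a harm probability between 0 and 1; the thresholds and action names are hypothetical.

```python
def moderation_action(harm_probability, gray=0.5, collapse=0.8, block=0.95):
    """Map a classifier's harm probability to a graduated moderation action.

    The probability is assumed to come from a model trained on removed posts;
    that model is out of scope for this sketch.
    """
    if not 0.0 <= harm_probability <= 1.0:
        raise ValueError("harm_probability must be between 0 and 1")

    if harm_probability >= block:
        return "hold_for_approval"  # hidden until a moderator approves it
    if harm_probability >= collapse:
        return "collapse"           # collapsed, users can expand it
    if harm_probability >= gray:
        return "gray_out"           # dimmed, users can vote to restore it
    return "show"
```

Every moderator decision on a held or collapsed comment could then be fed back as a training label, which is how the false positives would get approved away over time.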

A motivational approach seems to be harmful to the Fediverse, as it can be gamed and faked by bad actors, and Lemmy instances are probably already larger than most Discords. Discord is also pretty pointless with its unlock process, because 99% of the time I’ve seen it be more of an obfuscation of where to find the “unlock” emoji to finally be able to chat. There’s no voice chat here, so all you could limit is functionality on a featureless platform. What are you going to do, remove the ability to post from new users? This already sucked on Reddit and was a band-aid at best. I remember grinding AskReddit with every new account to get over that pointless karma level to finally be able to participate in my old account’s communities.

I think in a few years using an AI for this kind of task will be much more efficient and simpler to set up. Right now I think it would fail too much.

So we are turning into full reddit now…

I very much doubt this kind of system would be implemented for Lemmy.

I think if this is implemented, it should be configurable for each community. On !perchance@lemmy.world for example, people posting there will often have just made a Lemmy account so they can ask for help. Not being able to post images would make this harder so they would probably just make a post in the old subreddit.

Same idea for local communities (e.g. a university)

Sometimes the post is urgent, either for the user or as a PSA. I’d want to be able to configure how a system like this would work

This should be an overhaul of the moderation system in general. I find some communities have mods which ban based on disagreement alone regardless of any imposed rules.

I would like to see some kind of community review system on bans and their reasonings where the outcome can result in a punishment/demotion of the moderator who abused their power.

Yeah an appeal process to mitigate human bias would be nice.

I’ve been through that indeed, not the most pleasant experience

I think communities run by people who wish to mod them arbitrarily should be allowed to exist.

Good point but there has to be a balance somewhere.

Having extremist moderators in charge of a community whose name suggests it is unbiased can be misleading. Others will not create duplicate communities because one already exists with that name. And when those mods ban anyone who opposes their view, it creates a strong echo chamber and an unrealistic, unrepresentative community.

I’m mostly saying this because of the moderators of noncredibledefense, for example. They will ban people who state they oppose an all-out war with Russia, which is madness. Yes, it’s a shitpost community, but there’s a line.